Resistors

Power Rating

A resistor's power rating is one of its less obvious values, since it's rarely printed on the part. Nevertheless, it can be important, and it's a topic that will come up when selecting a resistor type.

Power is the rate at which energy is transformed into something else. It's calculated by multiplying the voltage difference across two points by the current running between them, and is measured in watts (W). Light bulbs, for example, convert electrical energy into light. A resistor, however, can only turn the electrical energy running through it into heat. Heat isn't usually a nice playmate with electronics; too much heat leads to smoke, sparks, and fire!
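As a rough illustration of the definition above, multiplying the voltage across a component by the current through it gives the power in watts. The function name `power_watts` is hypothetical; this is a minimal sketch, not part of any library.

```python
def power_watts(voltage_v: float, current_a: float) -> float:
    """Return power in watts from voltage (volts) and current (amps): P = V * I."""
    return voltage_v * current_a


# Example: 5 V across a part with 20 mA (0.02 A) flowing through it
print(power_watts(5.0, 0.02))  # prints 0.1
```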

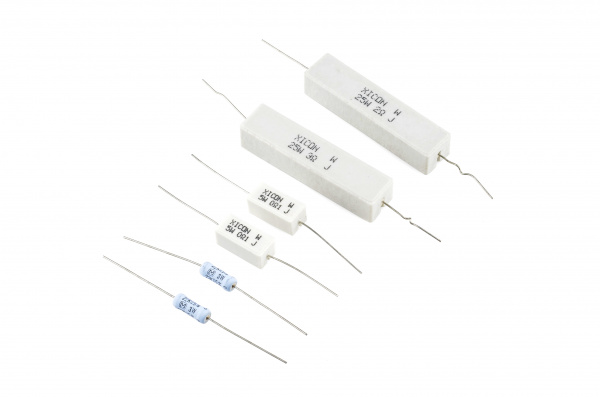

Every resistor has a specific maximum power rating. To keep the resistor from heating up too much, make sure the power it dissipates stays under its maximum rating. Power ratings are measured in watts and usually fall somewhere between ⅛W (0.125W) and 1W. Resistors rated for more than 1W are usually referred to as power resistors, and are used specifically for their power-dissipating abilities.
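A minimal sketch of the check described above, assuming you already know the power the resistor will dissipate and its rated maximum. The helper names are hypothetical, and the 1W threshold for calling something a power resistor simply follows the rule of thumb in this section; it isn't a formal standard.

```python
def within_rating(power_w: float, rating_w: float) -> bool:
    """True if the dissipated power does not exceed the resistor's maximum rating."""
    return power_w <= rating_w


def is_power_resistor(rating_w: float) -> bool:
    """Rule of thumb from the text: ratings above 1 W are usually called power resistors."""
    return rating_w > 1.0


print(within_rating(0.1, 0.125))  # 0.1 W in a 1/8 W resistor -> True
print(within_rating(0.5, 0.25))   # 0.5 W in a 1/4 W resistor -> False
print(is_power_resistor(5.0))     # a 5 W resistor -> True
```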

Finding a resistor's power rating

A resistor's power rating can usually be deduced from its package size. Standard through-hole resistors usually come with ¼W or ½W ratings. More special-purpose power resistors may list their power rating on the resistor itself.

The power ratings of surface-mount resistors can usually be judged by their size as well. Both 0402- and 0603-size resistors are usually rated for 1/16W, and 0805s can take 1/10W.
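The rules of thumb from this section can be collected into a small lookup table. This is a sketch under stated assumptions: the `TYPICAL_RATINGS_W` table and `typical_ratings` helper are hypothetical, the values are only the typical figures quoted above, and a real part's datasheet always takes precedence. `Fraction` keeps ratings like 1/16W exact.

```python
from fractions import Fraction

# Typical ratings (watts) quoted in the text; not guaranteed for any specific part.
TYPICAL_RATINGS_W = {
    "through-hole": (Fraction(1, 4), Fraction(1, 2)),  # usually 1/4 W or 1/2 W
    "0402": (Fraction(1, 16),),
    "0603": (Fraction(1, 16),),
    "0805": (Fraction(1, 10),),
}


def typical_ratings(package: str) -> tuple:
    """Return the typical rating(s) for a package; raises KeyError if it isn't listed."""
    return TYPICAL_RATINGS_W[package]


for pkg in ("0402", "0805", "through-hole"):
    print(pkg, [str(r) for r in typical_ratings(pkg)])
```

When a package has more than one typical rating and the exact part is unknown, taking `min(typical_ratings(pkg))` is the conservative choice.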

Calculating power in a resistor

Power is usually calculated by multiplying voltage and current (P = IV). But, by applying Ohm's law (V = IR), we can also use the resistance value to calculate power. If we know the current running through a resistor, we can calculate the power as:

$$P = I^2 R$$
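Substituting Ohm's law (V = IR) into P = IV gives the current-based form, P = I²R. A quick sketch, with a hypothetical helper name, that also cross-checks the result against P = IV: 20mA through a 330Ω resistor dissipates about 0.132W, slightly more than a ⅛W (0.125W) part is rated for.

```python
def power_from_current(current_a: float, resistance_ohm: float) -> float:
    """P = I^2 * R, with current in amps and resistance in ohms."""
    return current_a ** 2 * resistance_ohm


current = 0.020      # 20 mA
resistance = 330.0   # ohms

p = power_from_current(current, resistance)
voltage = current * resistance      # Ohm's law: V = I * R
print(round(p, 3))                  # prints 0.132
print(round(voltage * current, 3))  # same power via P = I * V: prints 0.132
```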

Or, if we know the voltage across a resistor, the power can be calculated as:

$$P = \frac{V^2}{R}$$
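The voltage-based form, P = V²/R, is handy when you know the voltage across the resistor. A minimal sketch with a hypothetical helper name: 5V across 1kΩ dissipates 25mW, comfortably within a ⅛W rating, while 12V across 100Ω dissipates 1.44W, which is power-resistor territory.

```python
def power_from_voltage(voltage_v: float, resistance_ohm: float) -> float:
    """P = V^2 / R, with voltage in volts and resistance in ohms."""
    return voltage_v ** 2 / resistance_ohm


for v, r in ((5.0, 1000.0), (12.0, 100.0)):
    p = power_from_voltage(v, r)
    print(f"{v} V across {r:g} ohms -> {p:.3f} W")
```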