Data Types in Arduino

Time and Space

The processor at the heart of the Arduino board, the Atmel ATmega328P, is a native 8-bit processor with no built-in support for floating point numbers. In order to use data types larger than 8 bits, the compiler needs to make a sequence of code capable of taking larger chunks of data, working on them a little bit at a time, then putting the result where it belongs.

This means that it is at its best when processing 8-bit values and at its worst when processing floating point. To demonstrate this fact, I've written a simple Arduino sketch which does some very simple math and can easily be altered to use different data types to perform the same calculations.

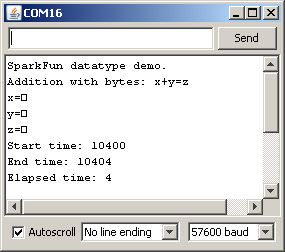

First, up, let's dump the code as-is into an Arduino Uno and see what results we get on the serial console.

Okay, lots of stuff there. Let's take things a bit at a time.

First, if you're following along, check the compiled size of the code. For addition with bytes, we end up with 2458 bytes of code. Not a lot, and, frankly, most of that is taken up with the serial output stuff. This data point will become important later on, however.

Next, let's look at the serial port output. What's the deal with the squares instead of a number for the printed variable values? That happens because the Serial.print() function changes the way it operates based on the type of data which is passed to it. For an 8-bit value (be it a char or byte), it will simply pipe out that value, in binary. The serial console is then going to try to interpret that data as an ASCII character, and the ASCII characters for 1, 2, and 3 are 'START OF HEADING', 'START OF TEXT', and 'END OF TEXT'. Hmm. Not particularly useful, are they, nor easy to display in one character? Hence the square: the serial console is throwing up its hands and saying, 'I don't know how to print this, so I made a square for you'. So, lesson one in Arduino datatype finesse: to get the decimal representation of an 8-bit value from Serial.print(), you must add the DEC switch to the function call, like this:

Serial.print(x, DEC);

Finally, observe the 'Elapsed time' measurement. Discounting the inaccuracies from using the micros() function to measure elapsed time, which we'll do on all these tests, so we should get a very good RELATIVE measure of the time required for operations, if not a good absolute measure, you can see that adding two 8-bit values requires approximately 4 microseconds of the processor's time to achieve.